2015 was a pilot year for Crab Team monitoring. This is the second of two posts in which Natalie White, an undergraduate in the UW Program on the Environment Capstone Program, shares her work to understand the volunteer experience during our pilot year. In a third post, we’ll fill you in on how we’ve responded to this information, and what we learned by listening.

In the previous post, I told you a little bit about my project and what steps I took to evaluate the program from a volunteer’s perspective. Now, I’m going to tell you what I found out.

Let’s start with the feedback from the initial training workshops. Based on answers to the identification questions, we learned that Crab Team staff need to spend more time exploring crab identification with volunteers. Half an hour just isn’t enough time to learn about all the critters volunteers might befriend out there (plus, that’s the fun part!). Several contextual questions, which asked volunteers to recall ecological information covered in the training, also presented challenges: for most of these questions, roughly 50% of volunteers provided the correct answer. Once again, this means more time is needed to cover the broader context of the program and the specific details of the protocol. It also indicates that Crab Team staff should make sure to be consistent between training workshops, hitting their mark every time. Volunteers appreciated the practical demonstrations of how to set traps and how to measure crabs, as well as the breadth of information covered, but noted that they needed more time to benefit fully from the training. Lesson learned: No one wants to feel rushed when they’re learning something new!

Now for my field visits with volunteers: I observed three groups as they tested the waters on their own, and I was definitely impressed by how efficiently and cohesively the volunteers worked together! Minor hiccups arose now and again, when questions over small details emerged or a site-specific issue popped up, but for the most part everyone seemed confident and well prepared.

It was very inspiring for me to watch these volunteers work with the protocol on their own, make sense of it, and develop systems that worked for them! I was impressed with the teamwork of one group in particular: each volunteer had his or her own role (e.g., data collector, trap retriever, processor of contents), while all team members seized the opportunity to assist one another by verifying a species ID or helping complete a given task, which made for very efficient and cooperative sampling. The care and thought that volunteers put into their sampling stood out, especially when one considers how important citizen science is in our response to environmental issues. Getting out in the field and participating is a critical part of the process.

When asked to reflect on the strengths of the program, the majority of volunteers emphasized the quality of communication with Crab Team staff, the thorough reference materials (identification guide and protocol) provided to them, and the importance of having multiple meetings with Crab Team staff. Overall, volunteers were very positive about the program, which bodes well for the next sampling season.

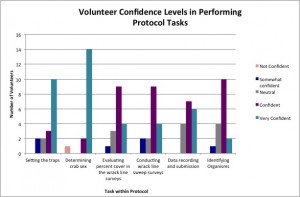

Now, what about that online survey? By the time volunteers took the survey, they had more experience and practice sampling, so it’s not entirely surprising that the survey generated very positive results. Notably, most volunteers were either “confident” or “very confident” with all parts of the protocol. This is definitely encouraging and suggests that the Crab Team is doing a good job getting volunteers ready to sample.

Self-reported volunteer confidence, by sampling task, after completion of training, site visit, and 2 months of sampling.

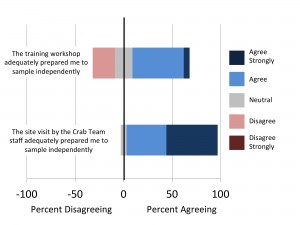

A big part of that preparation likely has to do with the site visits held in August. Although volunteers found the initial training session helpful, when asked to rate how prepared to sample they were after the workshop versus the site visit, the majority of volunteers sided with the site visit. Having that additional, individualized meeting with a staff member at their actual site is invaluable! Rather than trying to troubleshoot site-specific issues alone, volunteers had a staff member there to walk them through it.

Likert responses to how prepared to sample independently volunteers felt following the training workshop, and, subsequently, following the site visits with Crab Team staff.

Because the program is so new, I wasn’t able to assess the relationship between volunteer preparation and data quality. Nevertheless, I believe that if volunteers are well prepared and are enjoying their time with the program, they are more likely to collect high-quality data and will, hopefully, stick around for quite a while!

I therefore recommend that the Crab Team:

- increase the length of the initial training session,

- maintain strong communication between program staff and volunteers, and

- continue this evaluation process.

These steps will not only increase the appeal of this program for volunteers but will also address some of those lingering challenges with citizen science. Returning and incoming volunteers are sure to love participating in this awesome new citizen science program!

FEB 2016